Kubernetes (k8s) makes it much easier to deploy and manage containers. However, maintaining the platform yourself can be complicated and expensive, so many companies opt for managed Kubernetes services. This article gives an overview of Kubernetes as a Service (KaaS) and compares two of the top providers: Google Kubernetes Engine (GKE) and Amazon Elastic Kubernetes Service (EKS).

What Is Kubernetes?

Kubernetes is an open-source container orchestration system. It enables you to automate the deployment, management, and scaling of containerized applications. Google announced it as an open-source project in 2014 and version 1.0 followed in 2015, after which it quickly became the standard for container orchestration. You can learn more about Kubernetes architecture and features in the Kubernetes documentation.

Some of the benefits of Kubernetes include:

– Portability

You can deploy Kubernetes on almost any infrastructure: in the cloud, on-premises, on virtual machines, and in hybrid environments. Kubernetes is vendor agnostic, which reduces the risk of vendor lock-in.

– Scalability

Kubernetes enables you to scale workloads horizontally by adding more pods. It also supports auto-scaling: the Horizontal Pod Autoscaler can change the number of pods automatically in response to metrics such as CPU utilization.
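To make the auto-scaling idea concrete, here is a minimal Python sketch of the scaling rule described in the Kubernetes documentation for the Horizontal Pod Autoscaler: the desired pod count is the current count multiplied by the ratio of observed to target utilization, rounded up. The function name and the default bounds are assumptions made for illustration, and the sketch leaves out details of the real controller such as its tolerance band and stabilization window.

```python
import math


def desired_replicas(current_replicas: int,
                     current_cpu_percent: float,
                     target_cpu_percent: float,
                     min_replicas: int = 1,
                     max_replicas: int = 10) -> int:
    """Return the pod count suggested by the documented scaling ratio.

    Hypothetical helper for illustration; not part of any Kubernetes client.
    """
    if target_cpu_percent <= 0:
        raise ValueError("target_cpu_percent must be positive")
    # Core rule: scale the current count by observed load / target load.
    raw = math.ceil(current_replicas * current_cpu_percent / target_cpu_percent)
    # Keep the result inside the configured bounds (illustrative defaults).
    return max(min_replicas, min(max_replicas, raw))


if __name__ == "__main__":
    # 4 pods averaging 90% CPU against a 60% target.
    print(desired_replicas(4, 90.0, 60.0))
```

With four pods at 90% average CPU and a 60% target, the ratio is 1.5, so the sketch suggests six pods.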

– High Availability

The Kubernetes platform offers features such as self-healing to ensure high availability. The kubelet on each node checks the health of containers and restarts those that fail, while controllers replace pods that are lost. Load balancing features also help by distributing traffic across pods.
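The self-healing behavior can be pictured as a loop that compares the desired number of healthy pods with what is actually running. The following Python sketch is a toy simulation of that idea only. The `Pod` class, the `reconcile` function, and the `web-N` naming scheme are all hypothetical and do not correspond to any Kubernetes API.

```python
from dataclasses import dataclass
from itertools import count


@dataclass
class Pod:
    name: str
    healthy: bool = True


def reconcile(pods: list, desired: int, next_id) -> list:
    """One pass of a toy control loop: drop unhealthy pods, then refill."""
    # Remove pods that failed their health check.
    survivors = [p for p in pods if p.healthy]
    # Create replacements until the desired count is reached.
    while len(survivors) < desired:
        survivors.append(Pod(name=f"web-{next(next_id)}"))
    # Trim any surplus if the desired count was lowered.
    return survivors[:desired]


if __name__ == "__main__":
    ids = count(1)
    pods = reconcile([], 3, ids)      # starts web-1, web-2, web-3
    pods[1].healthy = False           # simulate a failure of web-2
    pods = reconcile(pods, 3, ids)    # web-2 is replaced by web-4
    print([p.name for p in pods])
```

The point of the design is that the loop never repairs a broken pod. It discards it and creates a fresh one, which is why applications on Kubernetes are usually written to tolerate pod replacement.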

What Is Kubernetes as a Service?

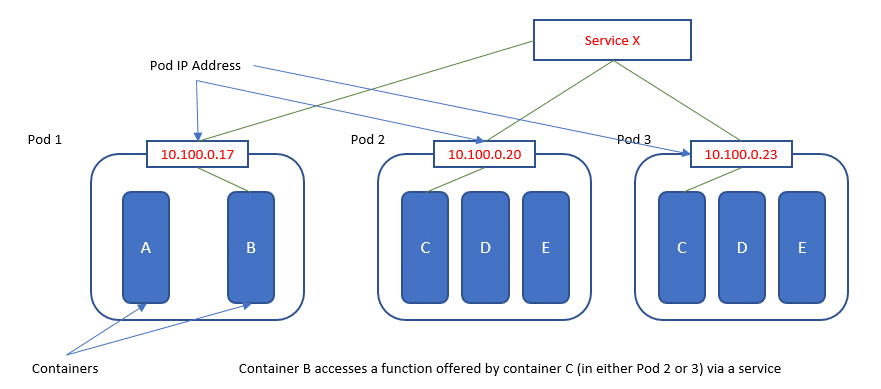

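Kubernetes as a Service (KaaS) is a managed offering in which a provider runs and maintains Kubernetes, in particular the control plane, on your behalf. To see what you still work with day to day, you first need to understand how pods work. A pod is a group of one or more containers that run together on the same node and share resources such as storage volumes and a network IP address. You can reschedule pods and scale them up or down according to your needs. However, pods can fail, so you cannot rely on individual pods to provide long-term stability.

This is where the Kubernetes Service resource comes in, which should not be confused with Kubernetes as a Service. A Service is a way to group pods and define the policy for accessing them, giving clients a stable endpoint while individual pods come and go. It is commonly used to expose microservices. The main Service types are:

- ClusterIP: exposes the pods on an internal IP address that is reachable only from within the cluster.

- NodePort: exposes the pods to external traffic through a fixed port on each node of the cluster.

- LoadBalancer: exposes the pods to external traffic through a load balancer that distributes the incoming requests.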
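The three types listed above correspond to the `type` field of a Kubernetes Service manifest. As a rough sketch, the Python snippet below builds such a manifest as a dictionary and prints it as JSON, which `kubectl apply -f` accepts alongside YAML. The helper name `service_manifest`, the `app` label key, and the example values are assumptions chosen for illustration.

```python
import json

# Only the three Service types discussed in this article.
SERVICE_TYPES = {"ClusterIP", "NodePort", "LoadBalancer"}


def service_manifest(name: str, app_label: str, service_type: str,
                     port: int, target_port: int) -> dict:
    """Build a minimal Service manifest (hypothetical helper)."""
    if service_type not in SERVICE_TYPES:
        raise ValueError(f"unsupported service type: {service_type}")
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": name},
        "spec": {
            "type": service_type,
            # Pods carrying this label receive the traffic.
            "selector": {"app": app_label},
            # 'port' is what clients use; 'targetPort' is the container port.
            "ports": [{"port": port, "targetPort": target_port}],
        },
    }


if __name__ == "__main__":
    manifest = service_manifest("web", "web", "NodePort", 80, 8080)
    print(json.dumps(manifest, indent=2))
```

Switching between the types is a one-word change in the manifest, although what the cluster does in response differs considerably, especially for `LoadBalancer`, which depends on the cloud provider.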

A Few Considerations Before Implementing KaaS

Installing and configuring Kubernetes is not an easy task. Teams preparing to use Kubernetes as a Service should keep these considerations in mind:

- Reliability: provide persistent cloud storage so that data survives pod restarts and rescheduling. Newly built clusters can be unstable, so monitor them for network problems.

- Security: tighten access by granting users only the permissions they need. Segmenting your network adds another layer of protection.

- Scalability: although k8s enables you to scale workloads rapidly, you should monitor the process. Unchecked scaling can lead to higher resource costs than you intend.
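For the security consideration above, Kubernetes role-based access control (RBAC) is the usual way to grant permissions only where they are needed. The sketch below builds a Role that allows read-only access to pods in a single namespace and prints it as JSON for use with `kubectl apply -f`. The helper name, the role name `pod-reader`, and the namespace `team-a` are illustrative assumptions.

```python
import json


def read_only_pod_role(namespace: str, name: str = "pod-reader") -> dict:
    """Build a namespaced Role that can only read pods (hypothetical helper)."""
    return {
        "apiVersion": "rbac.authorization.k8s.io/v1",
        "kind": "Role",
        "metadata": {"namespace": namespace, "name": name},
        "rules": [
            {
                # "" refers to the core API group, where pods live.
                "apiGroups": [""],
                "resources": ["pods"],
                # Read-only verbs: no create, update, or delete.
                "verbs": ["get", "list", "watch"],
            }
        ],
    }


if __name__ == "__main__":
    print(json.dumps(read_only_pod_role("team-a"), indent=2))
```

A Role has no effect on its own. It still has to be attached to a user, group, or service account through a RoleBinding.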

Comparison of EKS vs GKE

EKS and GKE are two of the top managed k8s service providers. Many of their features are similar or identical, so only the features that the two providers handle differently are highlighted below.

Amazon Elastic Kubernetes Service

Amazon EKS was first made available in June 2018. It runs control plane instances across multiple AWS Availability Zones. EKS monitors these control plane instances and provides automated upgrades and patching for them. Some of the features of EKS include:

– Manual Updating

AWS does not update worker nodes automatically; updates are on-demand only. To update nodes, you need to trigger the update yourself through the command-line interface.

– Resource Monitoring

AWS relies on third-party providers to offer integrated monitoring solutions.

– Availability

At the time of writing, EKS is only available in the US, Europe, and Asia. It is not available in Latin America, Oceania, or Africa.

– High Availability Clusters

Your master (control plane) nodes can be spread over more than one Availability Zone, so the cluster remains available if one of them fails. EKS does not provide the same level of support for worker nodes.

– Auto-Scaling

AWS offers auto-scaling but requires some manual configuration.

Google Kubernetes Engine

Since Google is the creator of Kubernetes, it is logical that GKE was the first KaaS available on the market. The service automates container management by scheduling containers onto a cluster according to predefined parameters. Some of the features of GKE include:

– Automatic Updating

GKE provides automated updates for your clusters. No command-line work or manual updating is required.

– Resource Monitoring

GKE has a built-in monitoring platform called Stackdriver (since rebranded as Cloud Monitoring and Cloud Logging). You can use it for integrated logging as well.

– Availability

At the time of writing, GKE covers the US, Europe, Asia, Oceania, and Latin America. It is not yet available in Africa.

– High Availability Clusters

GKE offers master replication over more than one zone. Unlike EKS, GKE extends this support to worker nodes.

– Auto-Scaling

GKE provides almost full automation of scaling. You only need to select the virtual machine size and the minimum and maximum number of nodes for the node pool. GKE then scales the pool within these limits.
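The effect of the minimum-maximum range can be illustrated with a back-of-the-envelope estimate. The Python sketch below divides the total CPU requested by pods by the CPU of one node and clamps the result to the node pool range. This is not how GKE's autoscaler actually decides, since the real logic considers whether individual pods can be scheduled. The function name and the millicore figures are assumptions for illustration.

```python
import math


def nodes_needed(requested_cpu_millicores: list,
                 node_cpu_millicores: int,
                 min_nodes: int,
                 max_nodes: int) -> int:
    """Estimate node count from CPU requests, clamped to the pool range.

    Hypothetical helper; a simplification, not GKE's scaling algorithm.
    """
    if node_cpu_millicores <= 0:
        raise ValueError("node_cpu_millicores must be positive")
    if min_nodes > max_nodes:
        raise ValueError("min_nodes must not exceed max_nodes")
    total = sum(requested_cpu_millicores)
    # Nodes required if CPU were the only constraint.
    raw = math.ceil(total / node_cpu_millicores)
    # The pool never shrinks below min_nodes or grows beyond max_nodes.
    return max(min_nodes, min(max_nodes, raw))


if __name__ == "__main__":
    # Ten pods requesting 500m CPU each, on nodes with 2000m CPU.
    print(nodes_needed([500] * 10, 2000, min_nodes=1, max_nodes=5))
```

The upper bound is what protects you from runaway costs: however much load arrives, the pool stops growing at the maximum you configured.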

– On-Premises Clusters

You can run GKE on-premises on top of a vSphere cluster. You can find more information about how to install GKE on-prem in the GKE documentation.

Open-E JovianDSS with Kubernetes

With Open-E JovianDSS, you can build your own Kubernetes-based ecosystem. In this setup, Open-E JovianDSS serves as the storage backend through its CSI (Container Storage Interface) driver. Its features help keep your data protected and make container management easier.

Which One Is Better?

Kubernetes is gaining popularity as it becomes easier to deploy as a platform-as-a-service solution. Both Google Cloud and AWS are among the top cloud vendors, with GKE being the more mature solution. However, according to a 2019 State of the Cloud survey, interest in EKS has increased markedly, and it now has 44% adoption. Other surveys show that among users of a single option, GKE leads with 39% adoption versus 35% for EKS.

Both choices are capable and popular, but the KaaS you choose should ultimately depend on your workload requirements and goals. The popularity of an option only matters once you know that the solution can meet your needs.
